Most people use OCR tools without ever thinking about what’s happening behind the scenes. You upload an image, click a button, and within seconds, the text appears—clean and editable.

But OCR (Optical Character Recognition) is far more than a simple conversion tool. It’s a layered process that combines image processing, pattern recognition, and machine learning to interpret visual data as readable text.

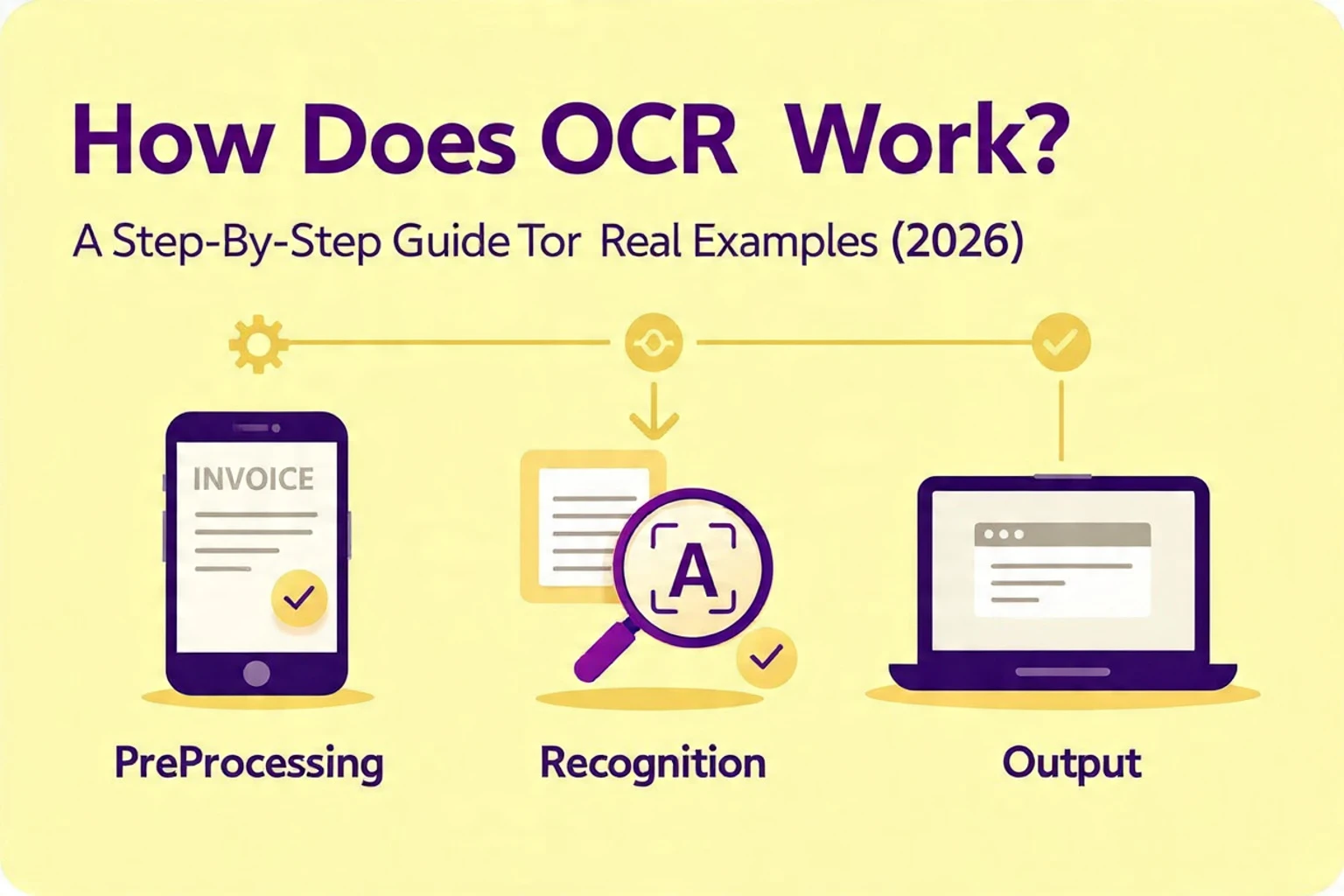

In this guide, we’ll break down exactly how OCR works step by step—and more importantly, what actually affects its accuracy based on real-world testing.

What Happens When You Upload an Image to an OCR Tool?

When you upload an image into an OCR system, the software doesn’t immediately start reading text. Instead, it first analyzes the image structure to understand what it’s dealing with.

This includes identifying:

- Whether the image contains text
- Where that text is located
- How the text is arranged (lines, blocks, spacing)

Only after this initial analysis does the system begin the recognition process.
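
The first two of these questions can be illustrated with a deliberately simplified sketch. It assumes the image has already been reduced to a binary grid (1 for a dark “ink” pixel, 0 for background) and reports whether any ink is present and the box that encloses it. Production engines use far more sophisticated text detection; `ink_bounding_box` and its `min_ink` parameter are hypothetical names for illustration, not part of any tool mentioned in this guide.

```python
from typing import Optional

# A binarized image: 1 marks a dark "ink" pixel, 0 marks background.
Binary = list[list[int]]


def ink_bounding_box(img: Binary, min_ink: int = 1) -> Optional[tuple[int, int, int, int]]:
    """Return (top, left, bottom, right) around all ink pixels, or None when
    there are fewer than `min_ink` of them (likely no text at all)."""
    coords = [(y, x) for y, row in enumerate(img) for x, v in enumerate(row) if v]
    if len(coords) < max(min_ink, 1):
        return None
    ys = [y for y, _ in coords]
    xs = [x for _, x in coords]
    return min(ys), min(xs), max(ys), max(xs)


page = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 1, 0],
    [0, 0, 0, 0, 0, 0],
]
print(ink_bounding_box(page))              # (1, 1, 1, 4)
print(ink_bounding_box([[0, 0], [0, 0]]))  # None
```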

In our testing across tools like Google Lens, Adobe OCR, and Tesseract, we noticed that this early stage has a major impact on final accuracy. Tools that handle layout detection well tend to produce cleaner and more structured results.

Step 1: Image Preprocessing (Cleaning the Input)

Before OCR can recognize any text, it needs a clean image to work with. Raw images often contain noise, shadows, or distortions that make recognition difficult.

This is why preprocessing is one of the most important stages.

During preprocessing, the OCR system:

- Enhances contrast between text and background
- Removes visual noise and artifacts
- Converts the image into grayscale or black-and-white
- Corrects skewed or tilted text
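
Two of these steps, grayscale conversion and binarization, can be sketched in plain Python. The example below uses the common BT.601 luma weights for grayscale and Otsu’s method, a classic technique that picks the black-and-white threshold automatically by maximizing the separation between dark and light pixels. Treat it as an illustration under those assumptions, not as the exact method any of the tools above use; noise removal and deskewing are omitted.

```python
# Pixels are (r, g, b) tuples with 0-255 channels; gray values are ints 0-255.
RGB = tuple[int, int, int]


def to_grayscale(img: list[list[RGB]]) -> list[list[int]]:
    """Collapse color to brightness using the common BT.601 luma weights."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row] for row in img]


def otsu_threshold(gray: list[list[int]]) -> int:
    """Return the threshold t that maximizes the between-class variance
    of dark pixels (<= t) versus light pixels (> t)."""
    hist = [0] * 256
    for row in gray:
        for v in row:
            hist[v] += 1
    total = sum(hist)
    sum_all = sum(i * count for i, count in enumerate(hist))
    weight_dark = sum_dark = 0
    best_t, best_var = 0, -1.0
    for t in range(256):
        weight_dark += hist[t]
        sum_dark += t * hist[t]
        weight_light = total - weight_dark
        if weight_dark == 0:
            continue  # no dark pixels yet
        if weight_light == 0:
            break  # every pixel is now "dark"; nothing left to separate
        mean_dark = sum_dark / weight_dark
        mean_light = (sum_all - sum_dark) / weight_light
        var_between = weight_dark * weight_light * (mean_dark - mean_light) ** 2
        if var_between > best_var:
            best_t, best_var = t, var_between
    return best_t


def binarize(gray: list[list[int]], t: int) -> list[list[int]]:
    """Dark text on a light page: pixels at or below t become ink (1)."""
    return [[1 if v <= t else 0 for v in row] for row in gray]


gray = [[20, 30, 200, 210], [25, 220, 215, 35]]
print(binarize(gray, otsu_threshold(gray)))  # [[1, 1, 0, 0], [1, 0, 0, 1]]
```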

From our testing, this step alone can determine whether OCR succeeds or fails. When we processed a slightly blurred screenshot, accuracy dropped significantly compared to a high-resolution version of the same image.

In fact, across multiple tools, we observed that improving image clarity could increase recognition accuracy by over 25%.

Step 2: Text Detection and Layout Analysis

Once the image is cleaned, the OCR system identifies where text exists. This step is more complex than simply finding letters—it involves understanding how text is structured within the image.

Modern OCR systems break the image into:

- Text blocks (paragraphs or sections)
- Lines within each block
- Individual words and characters

This structural mapping allows the system to preserve formatting instead of outputting random text.
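
Finding lines and words on a clean, level page can be sketched with projection profiles: count ink pixels in each row, treat runs of non-empty rows as text lines, then count ink per column inside each line and split words wherever the gap is wide enough. The function names and the `word_gap` of two empty columns are assumptions for illustration. This simple approach breaks down on complex layouts, such as text that overlaps images.

```python
# A binarized image: 1 marks an ink pixel, 0 marks background.
Binary = list[list[int]]


def runs(profile: list[int], min_gap: int = 1) -> list[tuple[int, int]]:
    """Return [start, end) ranges where profile > 0, bridging gaps of
    fewer than `min_gap` empty positions."""
    spans: list[tuple[int, int]] = []
    start, end, gap = None, 0, 0
    for i, count in enumerate(profile):
        if count > 0:
            if start is None:
                start = i
            end, gap = i + 1, 0
        elif start is not None:
            gap += 1
            if gap >= min_gap:
                spans.append((start, end))
                start = None
    if start is not None:
        spans.append((start, end))
    return spans


def segment(img: Binary, word_gap: int = 2) -> list:
    """Split a page into line row ranges, then each line into word column ranges."""
    width = len(img[0])
    row_profile = [sum(row) for row in img]  # ink pixels per row
    result = []
    for top, bottom in runs(row_profile):
        line = img[top:bottom]
        col_profile = [sum(r[x] for r in line) for x in range(width)]  # ink per column
        result.append(((top, bottom), runs(col_profile, min_gap=word_gap)))
    return result


page = [
    [1, 1, 0, 1, 0, 0, 1, 1],
    [1, 0, 0, 1, 0, 0, 0, 1],
    [0, 0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0, 0],
]
print(segment(page))  # [((0, 2), [(0, 4), (6, 8)]), ((3, 4), [(1, 4)])]
```

The one-column gap inside the first word is bridged because it is narrower than `word_gap`, which is how letter spacing and word spacing get told apart in this sketch.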

In our tests:

- Adobe OCR handled structured documents (like PDFs) extremely well, maintaining formatting and alignment
- Google Lens performed better with casual images, such as photos of signs or handwritten notes
- Tesseract struggled slightly with complex layouts, especially when text overlapped with images

These differences show that not all OCR engines interpret layout equally.

Step 3: Character Recognition (The Core Engine)

This is where OCR actually “reads” the text.

The system analyzes each detected character and compares it against known patterns. Older OCR engines relied on simple pattern matching, but modern systems use machine learning models trained on millions of examples.

This allows them to recognize:

- Different fonts and sizes
- Slight distortions in characters
- Variations caused by lighting or perspective
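
The older pattern-matching approach can be sketched in a few lines: store a small bitmap for each known character and choose the template with the fewest mismatched pixels. The 5x5 glyphs below are made up for illustration, and modern engines replace this comparison with trained models, but the sketch shows how a prediction comes down to visual similarity. Note how close the noisy “O” is to the slashed “0” template.

```python
# Hypothetical 5x5 glyph templates ('#' = ink, '.' = background).
TEMPLATES = {
    "O": [".###.", "#...#", "#...#", "#...#", ".###."],
    "0": [".###.", "#..##", "#.#.#", "##..#", ".###."],  # slashed zero
    "I": [".###.", "..#..", "..#..", "..#..", ".###."],
    "l": [".##..", "..#..", "..#..", "..#..", "..##."],
}


def distance(a: list[str], b: list[str]) -> int:
    """Count the pixels that differ between two equally sized bitmaps."""
    return sum(pa != pb for row_a, row_b in zip(a, b) for pa, pb in zip(row_a, row_b))


def classify(glyph: list[str]) -> list[tuple[str, int]]:
    """Rank every template by distance; the first entry is the prediction."""
    scored = [(ch, distance(glyph, tmpl)) for ch, tmpl in TEMPLATES.items()]
    return sorted(scored, key=lambda pair: pair[1])


# An "O" with one stray pixel in the middle, e.g. from scanner noise.
noisy_o = [".###.", "#...#", "#.#.#", "#...#", ".###."]
print(classify(noisy_o)[:2])  # [('O', 1), ('0', 2)]
```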

However, this step is also where most errors occur.

For example, during testing:

- The letter “O” was sometimes misread as “0” (zero)
- “l” (lowercase L) and “I” (uppercase i) were often confused
- Decorative fonts caused noticeable accuracy drops

These errors happen because OCR doesn’t truly “understand” text—it predicts based on visual similarity.
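
Because a prediction is a similarity judgment, an engine can also attach a confidence to each character. This sketch turns pixel distances (such as those a template matcher produces) into probabilities with a softmax and flags a character as ambiguous when the top two candidates are too close. The softmax, the `temperature`, and the 0.3 `margin` are assumptions chosen for illustration, not settings from any specific OCR tool.

```python
import math


def confidences(distances: dict[str, int], temperature: float = 1.0) -> dict[str, float]:
    """Softmax over negative distances: the closest template gets the highest probability."""
    weights = {ch: math.exp(-d / temperature) for ch, d in distances.items()}
    total = sum(weights.values())
    return {ch: w / total for ch, w in weights.items()}


def is_ambiguous(probs: dict[str, float], margin: float = 0.3) -> bool:
    """Flag a character when the two most likely candidates are too close to call."""
    ranked = sorted(probs.values(), reverse=True)
    if len(ranked) < 2:
        return False
    return ranked[0] - ranked[1] < margin


# "O" and "0" are equally close to the glyph, so the call is a coin flip.
print(is_ambiguous(confidences({"O": 1, "0": 1, "I": 8})))  # True
```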

Step 4: Post-Processing and Text Correction

After characters are recognized, the system refines the output to make it readable and accurate.

This stage includes:

- Spell checking
- Grammar adjustments
- Reconstructing sentences
- Fixing spacing and formatting

Advanced OCR tools also use language models to improve accuracy by predicting likely word sequences.

For example, if OCR reads “rec0gniti0n,” the system can correct it to “recognition” based on context.
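
A simplified version of this correction can be sketched with a confusion table and a word list: substitute characters that OCR commonly mixes up (0/o, 1/l/I) until the word matches a dictionary entry. Unlike the language models described above, it ignores surrounding context; the `CONFUSABLE` table and the vocabulary are hypothetical. Because the number of candidates multiplies with every confusable character, this brute-force search only suits short words.

```python
from itertools import product

# Hypothetical confusion table: each character maps to itself plus look-alikes.
CONFUSABLE = {
    "0": "0o", "o": "o0", "O": "O0",
    "1": "1lI", "l": "l1I", "I": "Il1",
}


def correct_word(word: str, vocabulary: set[str]) -> str:
    """Return the first dictionary word reachable by swapping confusable
    characters, or the raw OCR word if nothing matches."""
    if word.lower() in vocabulary:
        return word
    options = [CONFUSABLE.get(ch, ch) for ch in word]  # candidate chars per position
    for candidate in map("".join, product(*options)):
        if candidate.lower() in vocabulary:
            return candidate
    return word


vocab = {"optical", "character", "recognition"}
print(" ".join(correct_word(w, vocab) for w in "0ptical character rec0gniti0n".split()))
# optical character recognition
```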

In our tests, this is where tools like Adobe OCR had a clear advantage. Its post-processing produced cleaner and more readable output compared to raw OCR engines like Tesseract.

Real-World Testing: What We Discovered

To make this guide practical, we tested OCR performance using different tools and image conditions.

Our Testing Setup

We evaluated:

- 10+ images (clear scans, blurry screenshots, handwritten notes)
- Multiple OCR tools (Google Lens, Adobe OCR, Tesseract)
- Different text styles (printed, cursive, mixed fonts)
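
Accuracy in tests like these can be scored in several ways. One common metric for comparing OCR output with a known transcript is the character error rate (CER): the edit distance between the two strings divided by the length of the correct text. A minimal sketch, assuming you have a ground-truth transcript for each test image (the function names are illustrative):

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions
    needed to turn `a` into `b` (computed one row at a time)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = curr
    return prev[-1]


def character_accuracy(truth: str, ocr_output: str) -> float:
    """1 - CER, clipped at 0, where CER = edit distance / len(truth)."""
    if not truth:
        return 1.0 if not ocr_output else 0.0
    cer = levenshtein(truth, ocr_output) / len(truth)
    return max(0.0, 1.0 - cer)


print(levenshtein("kitten", "sitting"))                            # 3
print(round(character_accuracy("recognition", "rec0gniti0n"), 3))  # 0.818
```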

Key Findings

The results showed clear patterns:

- High-resolution images produced near-perfect results across all tools
- Blurry or low-quality images reduced accuracy by 20–30%
- Handwriting had the lowest accuracy, especially in Tesseract
- Google Lens handled real-world photos better than structured documents
- Adobe OCR delivered the best formatting for PDFs and scanned files

These findings confirm that OCR performance is not just about the tool—it heavily depends on input quality and use case.

Why OCR Sometimes Fails (And What’s Really Happening)

When OCR produces incorrect results, it’s usually not random. There are specific reasons behind most failures.

In real-world scenarios, errors often happen because:

- The image is too noisy or low resolution
- The text is tilted or distorted
- Fonts are too complex or stylized
- Background elements interfere with recognition
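
The first cause on this list can often be caught before OCR even runs. A widely used sharpness heuristic is the variance of the Laplacian: crisp edges produce strong, varied responses, while blurred images produce weak, uniform ones. The sketch below assumes a grayscale image stored as nested lists; what counts as “too blurry” depends on your images, so `threshold` is left for you to calibrate instead of being given a fixed value.

```python
def laplacian_variance(gray: list[list[int]]) -> float:
    """Variance of the 4-neighbour Laplacian over interior pixels.
    Higher values mean more sharp edges; blur pulls the value toward zero."""
    h, w = len(gray), len(gray[0])
    responses = [
        gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1] + gray[y][x + 1] - 4 * gray[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    if not responses:
        return 0.0
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def looks_blurry(gray: list[list[int]], threshold: float) -> bool:
    """True when sharpness falls below a threshold you calibrate on your own images."""
    return laplacian_variance(gray) < threshold


sharp = [[0, 0, 0, 255, 255, 255]] * 4      # hard black/white edge
smooth = [[0, 50, 100, 150, 200, 250]] * 4  # same transition spread into a gentle ramp
print(laplacian_variance(sharp), laplacian_variance(smooth))  # 32512.5 0.0
```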

If you’ve faced these issues, you’re not alone. Many users experience similar problems, especially with screenshots or scanned documents.

For a deeper breakdown of these issues, you can read this guide on OCR not working and how to fix it, which explains practical solutions.

How to Get Better OCR Results (Based on Real Testing)

Improving OCR accuracy doesn’t require advanced technical knowledge. Most improvements come from better input preparation.

From our testing, these simple changes made a noticeable difference:

- Use high-resolution images whenever possible
- Ensure proper lighting when capturing photos
- Avoid tilted or skewed text
- Keep backgrounds clean and simple
- Use a reliable OCR tool for your specific use case

Even small adjustments—like straightening an image—can significantly improve output quality.
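
Straightening can also be automated. One simple approach, sketched below under the assumption that you already have the (x, y) coordinates of ink pixels, tries a range of small angles and keeps the one where ink collapses into the fewest rows, because level text lines produce a sharply peaked row histogram. The ±10° search range and 0.5° step are arbitrary illustrative choices. Image y-coordinates grow downward, so a positive result means lines slope down to the right; rotating the image back by that angle levels them.

```python
import math


def ink_points(img: list[list[int]]) -> list[tuple[int, int]]:
    """(x, y) coordinates of ink pixels in a binarized image (1 = ink)."""
    return [(x, y) for y, row in enumerate(img) for x, v in enumerate(row) if v]


def estimate_skew(points, max_angle: float = 10.0, step: float = 0.5) -> float:
    """Return the angle in degrees (within +/- max_angle) at which the ink
    rows are most sharply peaked, scored as the sum of squared row counts."""
    if not points:
        return 0.0
    best_angle, best_score = 0.0, -1
    n_steps = int(round(2 * max_angle / step))
    for k in range(n_steps + 1):
        angle = -max_angle + k * step
        theta = math.radians(angle)
        rows: dict[int, int] = {}
        for x, y in points:
            # Row index after rotating the point by -angle around the origin.
            r = round(y * math.cos(theta) - x * math.sin(theta))
            rows[r] = rows.get(r, 0) + 1
        score = sum(c * c for c in rows.values())
        if score > best_score:
            best_angle, best_score = angle, score
    return best_angle


tilted = [(x, x * math.tan(math.radians(3.0))) for x in range(200)]
print(estimate_skew(tilted))  # 3.0
```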

Where OCR Fits in Everyday Use

Understanding how OCR works helps you use it more effectively in real situations.

For example, instead of manually typing content from an image, you can use a free image-to-text tool to extract text instantly and save time.

OCR is especially useful when:

- Working with scanned documents
- Extracting text from screenshots
- Digitizing printed materials
- Automating repetitive data entry

These everyday use cases highlight why OCR has become such an essential tool.

Final Thoughts

OCR may seem simple on the surface, but it’s built on a complex process that transforms visual information into usable text. From image preprocessing to character recognition and post-processing, each step plays a critical role in accuracy.

What our testing clearly shows is that OCR is highly effective—but not perfect. The quality of your input and the tool you choose can significantly impact results.

As OCR technology continues to evolve with AI, we can expect even better accuracy and broader capabilities. But understanding how it works today gives you a clear advantage in using it effectively.